What Is GEO (Generative Engine Optimization)?

Why This Matters If You Own—or Plan to Build—a Website

If you're here, you've probably asked yourself at least one of these questions.

What Is SEO and Why Does It Exist?

Who This Article Is For

This article is split into four sections, written for four different types of readers.

You can read it start to finish, or jump straight to the part that's relevant to you.

- Section 1 — Want to understand how SEO was born and why it exists? Start here.

- Section 2 — You've got a website, but tech isn't your thing? This section is written for you.

- Section 3 — You're an intermediate user or a web designer just getting started? You'll find practical, actionable concepts here.

- Section 4 — Already working with JSON-LD, structured data, and advanced architectures? Jump straight to the final section.

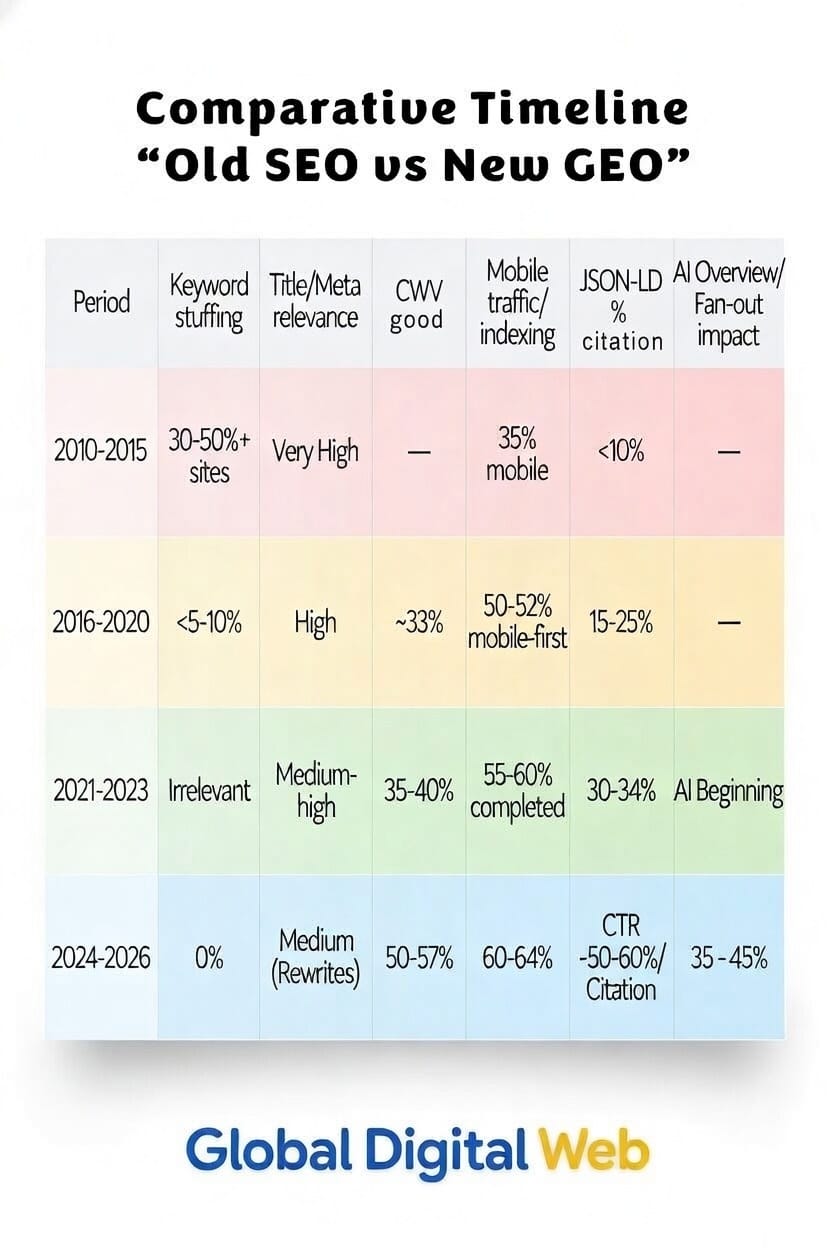

I'll start with a brief history of how the need for SEO emerged and why Google kept adding new rules to decide which sites it could trust when ranking results.

In Section 2, I'll speak to folks for whom tech feels overwhelming—but who still need to grasp the basics because they've got a website and want it to succeed for their business. I'll stick to dead-simple examples to help them understand what really matters.

If you're an intermediate user or a web designer just starting out, skip to Section 3, where I explain in more detail the importance of E-E-A-T when building a site, and how you can achieve it with solid SEO paired with structured data schemas—even using plugins if you're not yet comfortable writing them by hand.

Finally, if you're a fellow expert who hand-codes your own JSON-LD or generates it dynamically via PHP, jump straight to Section 4, where you'll find more technical language that'll help you understand where the future of SEO is headed with the implementation of AI-specific attributes.

Who's Writing This?

Before I explain how SEO was born and why it evolved, let me introduce myself briefly.

My name is Roberto Bianchi, and back in 1997 I was one of the first people in the world using JavaScript to bring websites to life. At the time, I was living in Finland. A site I built back then caught the attention of KONE Oy (which became KONE Corporation in 2005), and they offered me a position as head of the company's worldwide intranet—at the time connected to several thousand terminals around the globe.

Before long, I became the system administrator for the platform the company used to manage industrial designs.

At the same time, I also became an internal trainer. Between 1998 and 2002, I traveled the world for KONE: Italy, France, Finland, and the US.

I've been living back in Italy for a few years now:

- In 2022 I earned my TEFL OFQUAL Level 5 teaching certification

- In 2024 I opened my own company, Global Digital Web di Roberto Bianchi—I offer consulting, build websites (only for clients I carefully select), and teach technology in English for the European Union's Erasmus+ program

Titles aside, I’ve always been genuinely passionate about technology. And I feel like I can offer some solid advice to anyone who wants to know more.

The History of SEO

The World Before Google: AltaVista and Netscape

Let's start in 1997, when Google didn't exist yet.

Back then, the internet landscape looked very different from today:

- The go-to search engine was called AltaVista

- The most popular browser was called Netscape

- Web pages were written by hand only—no drag and drop

Meanwhile, between 1996 and 1997, two guys were laying the groundwork for what would become the most powerful search engine in history: Larry Page and Sergey Brin, building it at Stanford.

The Birth of SEO: How It All Started

Right around 1997, someone coined the term SEO—it basically meant "let's learn how to make our site show up better when people search for something."

(source: The history of SEO)

To get found on AltaVista, there were several tricks. The most common was writing your most important keywords 1,000 or even 2,000 times in white text on a white background, shrunk down to 1 pixel. Imagine writing your store's name a thousand times on a white piece of paper with a white marker. Visitors to the site saw nothing, but the ranking system read every word and put you at the top of the list.

Warning! These were tricks from 30 years ago. Today they're considered black hat practices and get penalized.

In 1998, while I was working at KONE, I still remember my colleague Jorma Heimonen—the resident genius at the time—who said to me:

"Roberto, have you tried using Google instead of AltaVista? Did you know they even give you a free email account?"

I still use that email account today.

Here's a screenshot of Google in 1999 when it was still in BETA.

Google's First "Roadblocks": Stop Playing Games

Google was growing fast. On November 16, 2003, the first major update arrived.

Something worth knowing: Google has a habit of giving code names to its updates—often names of animals, places, or seasons. Kind of like how meteorologists name hurricanes. Whenever you hear one of these names, you know Google has changed the rules of the game.

The first one was called Florida, and the message was clear: "Stop playing games!" All the sites using the old tricks disappeared from the first page.

It was a shock: tons of webmasters woke up to find their sites had vanished into thin air.

The response from many? Move the keywords into the metadata—a kind of invisible label in the page header that visitors never see but search engines definitely read. The thinking was: "If I hide them better, it'll still work."

But that got abused too with what's called keyword stuffing—cramming these tags with keywords repeated endlessly.

Imagine opening a restaurant menu and finding the word "pizza" written four hundred times. You'd immediately know something's fishy—and Google learned to spot that too.

In September 2009, Google closed that chapter for good: "We no longer use meta keywords for ranking: we've been ignoring them for years because they were abused."

(source: Google Does Not Use the Keywords Meta Tag)

And Google didn't stop there.

Panda, Hummingbird, and the Quality Years

In February 2011, Panda arrived—a filter that had no mercy for sites with short, copied, or advertorial-only content. It wanted real, useful, well-written content.

Panda was basically a quality check. If your content was thin or copied, you got hit.

Anyone running content farms saw their rankings collapse.

But this wasn't enough for Google, so in September 2013 came Hummingbird. With this update, Google stopped looking for isolated words and started understanding the intent behind the question.

Before, it was like a librarian searching for books only by exact title. After Hummingbird, it was like a librarian who understands what you actually want to read.

In practice: you could finally talk to Google like you'd talk to a person, not like you're filling out a form.

A curious detail: Hummingbird had actually been running quietly since August 20, but Google only announced it officially a month later—and in the meantime, nobody had noticed.

If you searched for "how to make a fluffy chocolate cake," it no longer returned random ingredient lists: it understood you wanted a good, easy recipe. That's when writing complete, natural sentences with logically ordered headings became important.

And while everyone scrambled to adapt, Google was already preparing the next move.

(source: Google Hummingbird)

2015: Mobilegeddon Arrives—Does Your Site Work on Mobile?

On April 21, 2015, one of the most important changes in SEO history arrived: the infamous Mobilegeddon.

Google decreed that a site must be comfortable and usable on phones too—especially on phones. If you opened a site on mobile and found tiny text or slow pages, Google drastically reduced its visibility in search results.

Those who didn't comply lost rankings and, with them, customers.

Ever heard the word "responsive"? It means a site that adapts perfectly to phones, tablets, and PCs, like in the example photo.

Today, a non-responsive site signals skills that fall short of the minimum standards of the modern web. Be careful with the "my nephew builds websites for €300" approach: a cheap site can cost you far more in lost visibility.

With the mobile problem solved, Google asked itself an even tougher question: "Okay, the site looks good on phones, but does whoever wrote it actually know what they're talking about?"

E-E-A-T and Helpful Content: Google Likes Professionals Who Actually Know Their Stuff

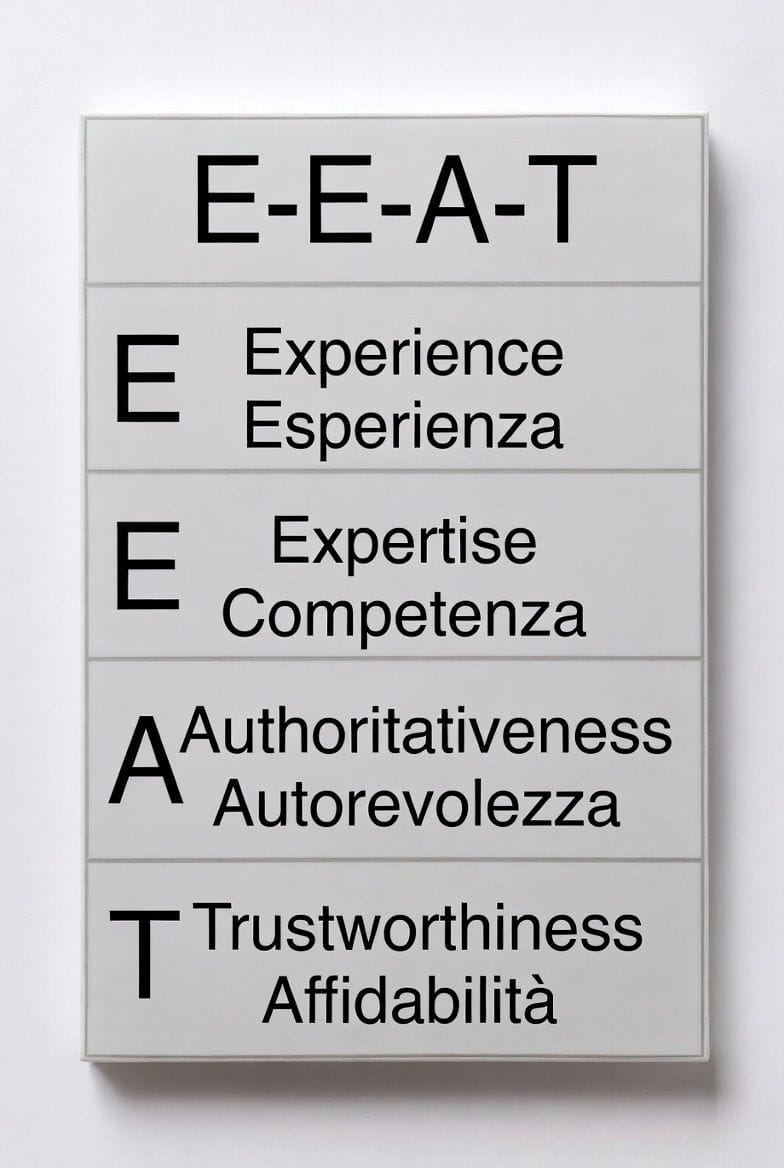

In August 2018, an update nicknamed the Medic Update arrived, so named because it hit hard the medical and health sites that were giving advice without the expertise to back it up. This was the moment when Google started treating those who demonstrated real expertise as more trustworthy. That concept has a name today: E-E-A-T.

Think about when you're looking for a doctor. You don't trust the first person you pass on the street: you want to know where they studied, how many years of experience they have, what other patients say. Google evaluates websites the same way.

(source: Creating Helpful Content)

| Letter | Meaning | In plain English |
|---|---|---|
| E | Experience | Have you personally lived what you're talking about? |
| E | Expertise | Are you actually knowledgeable on the topic? |
| A | Authoritativeness | Do others recognize you as an expert? |
| T | Trustworthiness | Can people trust what you write? |

The full acronym with the double E arrived in December 2022, but the idea was already there in 2018—and Google had been working on it quietly in their internal guidelines since 2014.

The Moral of the Story

So now you see why SEO exists? On one hand, it pushes people to produce higher and higher quality content. On the other, it weeds out those who exploit AI to crank out copy-paste sites on the fly, thinking they're webmasters.

In 1997, you could cheat with cheap tricks. Today? No way. No more shortcuts.

What does the near future hold? I'll talk about that below—in simple, intermediate, or expert-colleague versions.

For Non-Technical Folks: What Is GEO and Why It's Changing the Future of Search

But… You’ve Been Using It All Along

"Siri, where can I find a seafood restaurant nearby with good reviews?" "Hey Google, which pharmacies are open right now?"

Remember the first time you asked a question like that?

Even though AI exploded into the mainstream between late 2022 and 2023 with ChatGPT, we'd been using it for years without realizing it.

Why does this matter if you run a business and want a website that actually works? Because the way websites used to get found was simple: write a title, some subheadings, and some text, and search engines like Google and Bing decided who had the best answer. But things have changed drastically.

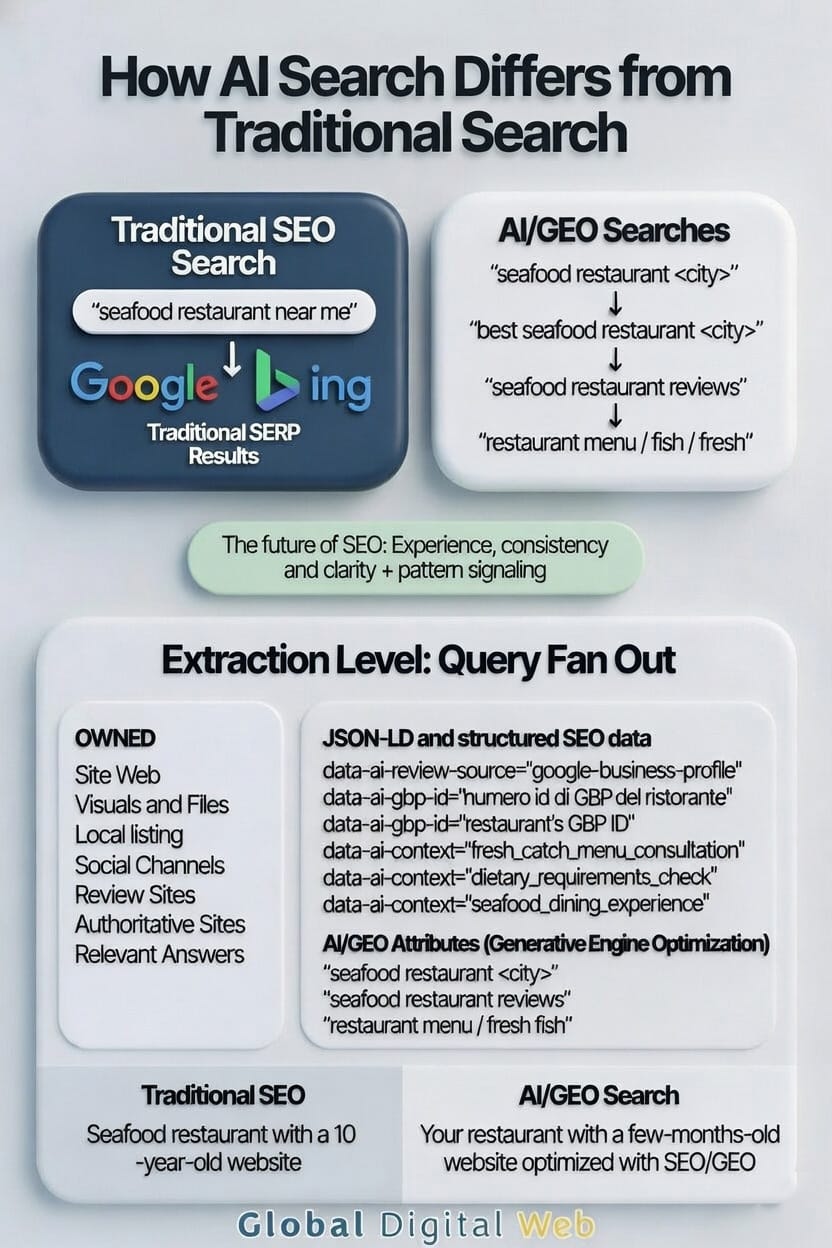

A Real Example: The Seafood Restaurant

Let's go back to that earlier question: "Siri, where can I find a seafood restaurant nearby with good reviews?"

People don't search on Google the way they used to. More and more, people let AI help them decide where to go, what to buy, and who to trust.

(source: How AI features work in Search)

To answer a question like this, it's not enough that whoever built the restaurant's site uploaded the menu. The AI needs to understand that the menu has good seafood dishes. It needs to know which dishes customers prefer. It needs to be able to cross-reference real reviews.

Think of AI as a personal consultant who knows every restaurant in town. If it wants to give you solid advice, it needs detailed, reliable information—not just a sign that says "seafood restaurant."

Whoever builds the site needs to properly connect it to the restaurant’s Google Business Profile, or its Yelp or TripAdvisor account, so that real customer reviews appear on the site. That way, AIs can cross-reference the information and figure out who to trust.

If the data is accurate and written the right way, instead of checking hundreds of results, the AI will use information from the most authoritative site—the one that speaks its language.

The Result: New Beats Old

In the past, older domains with established backlink profiles often had an advantage.

Today, people expect answers straight from AI. Here's an example. A new seafood restaurant called "Mario's", open only 6 months, has a site loaded with structured information:

- Who the chef is, how much experience they have, where they studied and worked

- Whether they've won any awards or recognition

- Real customer reviews

- A link to the Google Business Profile

The AI checks this data, verifies it's reliable, and responds: "If you want good seafood, go to 'Mario's'—the chef has tons of experience and the reviews are excellent. You'll find it on… Street. Here's the menu... here's the number to make a reservation."

A restaurant with a generic site lacking structured data starts at a major disadvantage compared to one that makes its information verifiable.

Google Doesn't Just Want Promises

Writing this information on your site isn't enough. Google wants verifiable, consistent, and cross-referenceable signals.

These need to be implemented by an experienced developer who uses specific technologies—like JSON-LD—to communicate with Google in a structured way. If the data is truthful and easily cross-referenced, it increases the chances the system will consider it trustworthy in the ranking process.

It's like the difference between saying "I'm a good cook" and showing your diploma from culinary school, customer reviews, and photos of your dishes. Words alone aren't enough—you need proof.

Those who use these technologies start with a competitive advantage over those with sites lacking advanced structured data.

(source: Automatically generating and ranking results - Google)

But I don't want to give false hope. This is a good start—but to reach and maintain top positions, you also need an editorial plan and consistent publishing that demonstrates over time you're able to help people.

There Is a Shortcut—But It Comes at a Cost

They're called Ads.

Basically, you tell Google or another advertising service: "When someone with these characteristics, in this age range, who lives in this area searches for this type of thing, put my site at the top."

When that person shows up, Google puts your site among the top results with the word "Sponsored" next to it—to warn that it's advertising.

Here's how it works:

- If the person doesn't click on your site, you pay nothing

- If the person clicks, you pay an amount that depends on how competitive that keyword is

- You pay whether they become a customer or leave without doing anything

The cost varies wildly. For some keywords, Google charges a buck or two per click. For highly competitive ones, the cost explodes.

Take the word "Rolex," for example: every time someone clicks on a sponsored result using that word, the site owner burns through tens of dollars—even if no sale happens.

There's another problem: once your budget runs out, visibility disappears almost immediately. The site drops back down as if the ads never existed.

So Should You Run Ads?

Personally, for a brand new site, I'd say "maybe".

If the site is built properly, Google can index you even the next day and get you showing up in results. But there's something you need to know:

- Those initial results are based only on visible text—what you read on the page

- They're not yet based on the data and code written specifically for Google

For a new site, in the first 2-4 months there'll be ups and downs in positioning, until Google sends its crawlers to examine the site's code.

Think of Google as a city inspector who has to visit your store before putting you on the official map. It takes some time, but when they arrive and find everything in order, they promote you.

That's the best moment to invest a few hundred bucks in advertising. Increased traffic can accelerate data collection and behavioral signals, which may indirectly support visibility over time. But only if you keep developing the site.

Websites on Google are like a shark tank—if you stop moving, something bigger will outrank you.

To understand how to avoid this and how to make the most of these technologies, let's move to the intermediate section—for those who already have experience building websites and want to understand how to use GEO to their advantage.

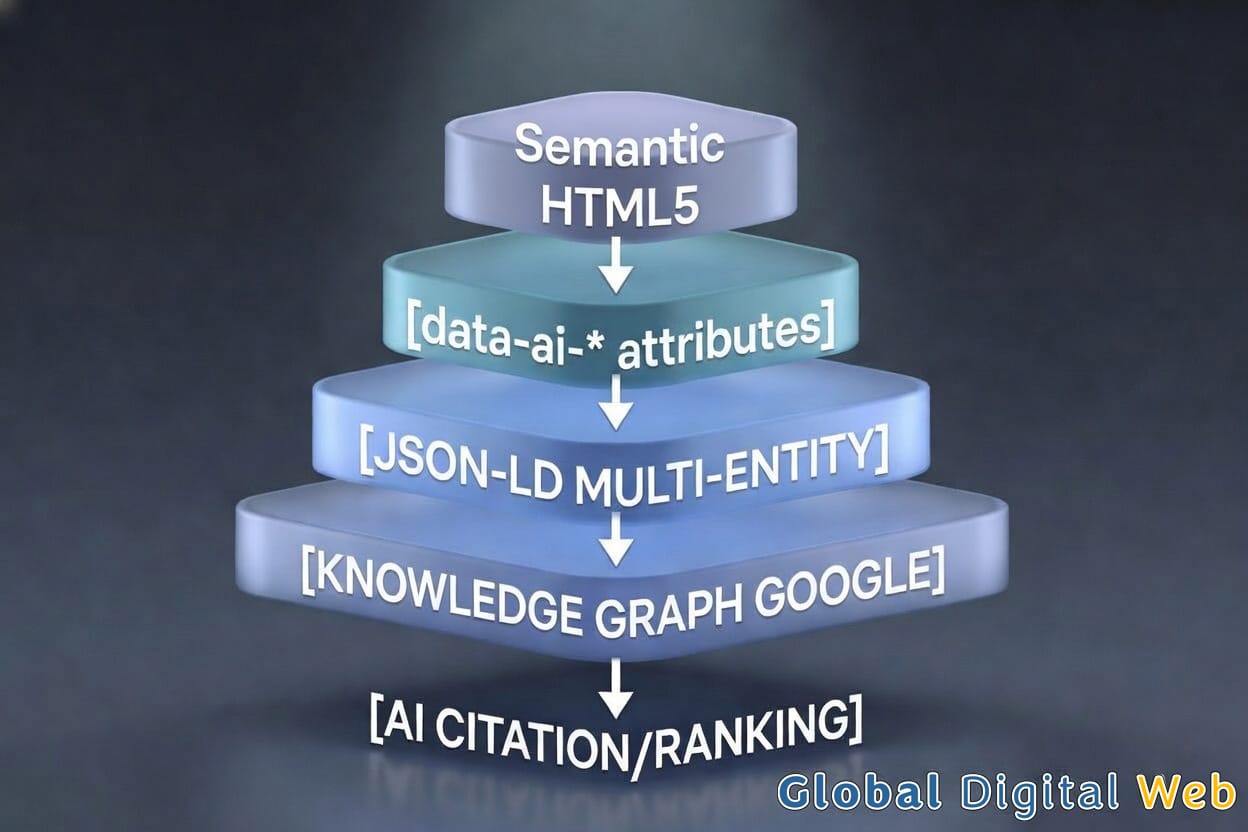

Beyond the Virtual Business Card: For Intermediate Users and Web Designers

SEO plugins aren't enough anymore. A professional site today needs to speak AI's language, have verifiable structured data, be fast, accessible, and consistent across all its digital identities.

The SEO Plugin Won't Save You

If you've got a WordPress site, you probably think installing an SEO plugin like Yoast or RankMath means you're "all set." Not quite.

Many sites with wow-factor design and SEO done only with plugins are just expensive virtual business cards that few people will ever see. Or nobody.

How AI Actually Thinks When It Searches for Information

AI search isn’t just a simple keyword lookup. When a search is complex, Google doesn't treat it as a single phrase. It breaks it down, analyzes the entities involved, and cross-references multiple sources to build a coherent answer.

(source: What Is Query Fan-Out? Semrush)

How AI actually thinks when it needs to answer a question

When a user asks a complex question, AI doesn't execute a single linear search.

Google has described this behavior as query expansion or query fan-out: the initial question gets broken down into multiple semantic sub-queries, executed in parallel.

In practice, the request:

"What's the best seafood restaurant in Rimini?"

gets transformed into a series of independent checks, including:

- identification of relevant entities (restaurants, chefs, locations)

- analysis of aggregated reviews and their reliability

- consistency between website, Google Business Profile, and external sources

- presence of readable and verifiable structured data

- signals of experience and authoritativeness over time

AI doesn't simply read your site: it correlates data from multiple sources and evaluates whether they converge toward the same entity and the same narrative.

A site that communicates vague or unverifiable information might have great copy, but it gets excluded because it doesn't provide enough confirmation signals.

A site that instead presents coherent structured data, credible external links, and clear E-E-A-T signals reduces ambiguity for the generative model.

In this sense, GEO optimization isn't just about "what you write," but how easy you make it for AI to verify that what you write is true.

The various signals are aggregated, weighted, and evaluated through ranking systems based on machine learning models, which estimate the probability that a page satisfies search intent better than available alternatives.

In the end, rankings come down to how your signals compare to everyone else’s for that specific query, and perceived quality relative to other results in the SERP.
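To make the fan-out idea above more concrete, here is a toy sketch in Python. It is not how Google or any real engine works internally: the two sites, the three sub-checks, their scores, and the weights are all invented for illustration. It only shows the general shape described above, where one question becomes several independent checks that run in parallel and are then combined into a weighted score per candidate.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical signals a system might hold about two restaurant sites.
# Every value here is invented for illustration.
CANDIDATES = {
    "marios.example": {"reviews": 0.9, "structured_data": 1.0, "profile_match": 1.0},
    "generic.example": {"reviews": 0.7, "structured_data": 0.0, "profile_match": 0.5},
}

# Assumed weights for each sub-check; real ranking systems do not publish theirs.
WEIGHTS = {"reviews": 0.4, "structured_data": 0.3, "profile_match": 0.3}


def run_check(site: str, check: str) -> tuple[str, str, float]:
    """One sub-query: look up a single signal for a single site."""
    return site, check, CANDIDATES[site][check]


def fan_out(sites: list[str]) -> dict[str, float]:
    """Run every (site, check) pair in parallel and return a weighted score per site."""
    jobs = [(site, check) for site in sites for check in WEIGHTS]
    totals = {site: 0.0 for site in sites}
    with ThreadPoolExecutor() as pool:
        for site, check, value in pool.map(lambda job: run_check(*job), jobs):
            totals[site] += WEIGHTS[check] * value
    return totals


scores = fan_out(list(CANDIDATES))
best = max(scores, key=scores.get)
print(best, round(scores[best], 2))
```

In this made-up data set, the site with complete structured data and a matching business profile comes out ahead even though both sites have decent reviews, which is the point the section makes about comparing signals across candidates.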

What to Ask Your Agency

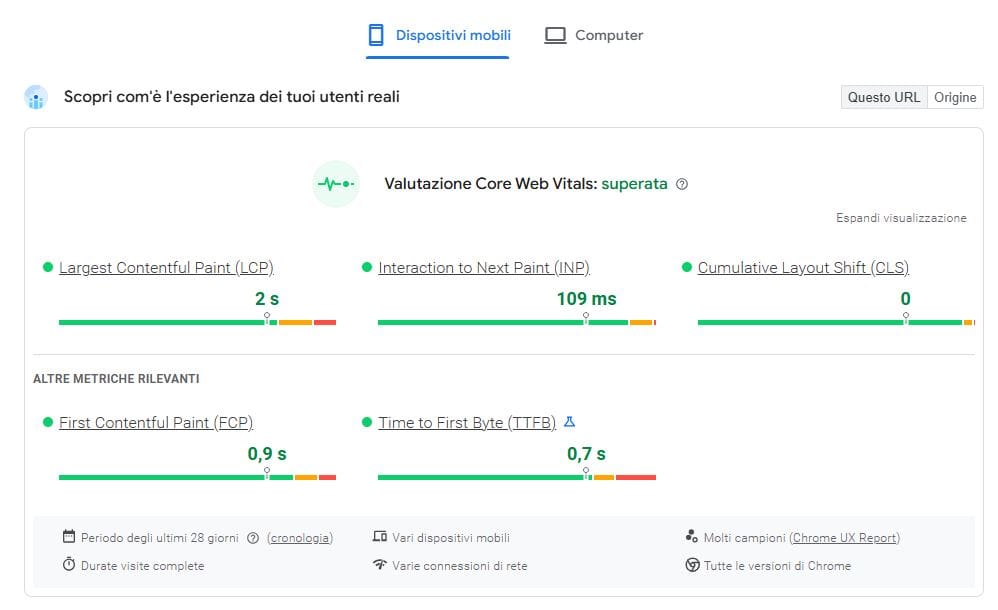

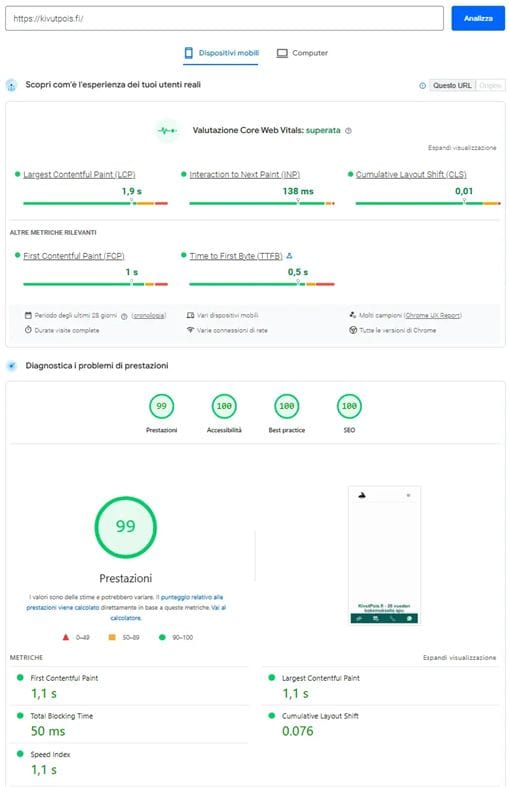

Site Speed: Target 90+

A score above 90 on PageSpeed doesn't guarantee ranking, but it indicates that technical performance isn't limiting the site's potential. If whoever built your site hasn't shown you the metrics, there might be a problem.

It's worth noting that, according to an analysis of over 60,000 search results, the top ten results on Google have an average score of around 78, and fewer than 15% exceed 90. That doesn't make 90 a pointless target; it means that those who reach 90+ already have a clear advantage over most of the competition.

One example is the performance metrics of a website we built for kivutpois.fi. (Read the case study)

Saving Money Isn't Always Savings

If you save on hosting and put your site on a low-performance server, you'll save a hundred bucks a year—but if it doesn't pass Core Web Vitals, performance can limit visibility potential compared to technically stronger competitors.

The savings turn into a much higher hidden cost.

A good developer should aim to hit at least:

- 90+ in Performance

- 100 in Accessibility

- 100 in Best Practices

- 100 in SEO

But careful—and here's the part agencies almost never tell you.

Unlike Performance, where a drop of a few points can make a real difference, hitting 100 in SEO on Google PageSpeed is pretty easy. It's like saying you've got a car with four wheels that moves: you get that result with both a Fiat 500 and a Ferrari. With advanced JSON-LD we'll still see the same 100, but the big difference will be the engine you put under the hood.

If you need a platform, ask the agency to show you results achieved with their actual clients.
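If an agency hands you a Lighthouse report exported as JSON, the four targets listed above can be checked in a few lines. This is a minimal sketch: the `sample_report` values are invented, and it relies only on the `categories` section of the report, where Lighthouse stores each score as a number between 0 and 1.

```python
import json

# Targets from the list above, on Lighthouse's 0-100 display scale.
TARGETS = {"performance": 90, "accessibility": 100, "best-practices": 100, "seo": 100}


def failing_categories(report: dict) -> dict[str, int]:
    """Return the categories whose score is below target, with the actual score."""
    failures = {}
    for category, target in TARGETS.items():
        raw = report["categories"][category]["score"]  # stored as 0.0-1.0, may be null
        score = round((raw or 0) * 100)
        if score < target:
            failures[category] = score
    return failures


# Stand-in for a report loaded from disk; the numbers are invented.
sample_report = json.loads("""
{"categories": {
  "performance": {"score": 0.93},
  "accessibility": {"score": 0.96},
  "best-practices": {"score": 1.0},
  "seo": {"score": 1.0}
}}
""")

print(failing_categories(sample_report))
```

An empty result means all four targets are met. As noted above, a clean SEO score here says nothing about the quality of the structured data behind it.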

The Wow Factor: Where to Put It and Where Not To

A common mistake among less experienced folks is putting the wow factor at page load.

The top portion of the page—what the browser shows immediately, technically called ATF (Above The Fold)—needs to be simple, simple, simple. Forget animations, transitions, and special effects in that zone.

Unless you're a PHP expert building your own scripts for zero-performance-cost wow effects, skip it.

If you still want the wow factor, put it after the second section—and make sure the DOM loads before any animation kicks in.

Think of the DOM as a house's foundation. First you build the foundation, then you add decorations. Anyone who does it backwards risks the house collapsing—or Google penalizing it.

This is also where the challenge of cookie banners and Google Analytics 4 comes in—both very heavy on performance, capable of eating up over 10 points from your score alone. If you're a PHP expert, you can manage loading to limit the impact. Otherwise, it's a variable to keep in mind when choosing the right plugin.

Interconnection: Does Your Site Talk to the Rest of the World?

Anyone with a site needs to ask themselves a simple question: Is my site connected to my Google Business Profile? Are my social channels connected so Google understands they're the same entity?

This is called Entity Linking—and it's fundamental for GEO.

(source: What Are Entities on Google and Why They Matter for SEO, Semrush)

Imagine you're a doctor with a practice and three online profiles: LinkedIn, a Facebook page, and a Google profile. If these three "pieces" don't talk to each other, to Google you're three different people. Entity Linking is the invisible thread that ties them together and tells Google: "It's all me."
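In structured data, that "invisible thread" is usually expressed with the schema.org `sameAs` property. The sketch below builds such a node in Python and prints it as JSON-LD. The business name, the `doctor.example` domain, the profile URLs, and the `build_entity` helper are placeholders made up for the example; the `LocalBusiness` type is just one reasonable choice.

```python
import json


def build_entity(name: str, site_url: str, profiles: list[str]) -> dict:
    """Build a schema.org node whose sameAs list ties the site to its other profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "@id": f"{site_url.rstrip('/')}/#business",
        "name": name,
        "url": site_url,
        "sameAs": sorted(set(profiles)),  # de-duplicate and keep the output stable
    }


entity = build_entity(
    "Dr. Example Practice",
    "https://doctor.example/",
    [
        "https://www.linkedin.com/in/dr-example",
        "https://www.facebook.com/drexample",
        "https://www.facebook.com/drexample",  # accidental duplicate, removed above
        "https://maps.example/place/dr-example",  # placeholder for the Google profile URL
    ],
)

# The printed JSON is what would go inside a <script type="application/ld+json"> tag.
print(json.dumps(entity, indent=2))
```

The same URLs should also appear on the profiles themselves, pointing back to the site, so the link can be confirmed from both directions.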

The TL;DR: Not Just for Readers, But for AI

Do we have a summary for people in a hurry? Generative models work better when they encounter structured, summarizable, and semantically explicit content. If your agency never talks to you about E-E-A-T, they might not be the best solution.

The TL;DR—"Too Long; Didn't Read"—is a clear synthesis of the page's main points.

It's not just a summary for the reader: it's a way to make the central theme of the content explicit at a structural level too.

A summary section gives generative models exactly the organized, synthesizable information they work best with.

But careful—it's not enough to write "TL;DR" and hope it works. If the content is in the HTML code, it can be analyzed even if it's not graphically visible. The important thing is that it's not blocked at the crawling level.

A small technical example of how to structure it:

```html
<aside data-ai-summary="true">
  <p>
    <strong class="ai-tldr-label">TL;DR:</strong>
    Your summary here.
  </p>
</aside>
```

With dedicated CSS, the section can be made less intrusive for readers while maintaining a clear structure accessible at the code level. In this article, for example, there are five of them—and you probably didn't notice.

Naturally, to maximize results we'll also want to insert an abstract in our JSON-LD, reporting the same content we wrote in the TL;DR:

```json
{
  "@context": "https://schema.org",
  "@type": "Article",
  "abstract": "Your TL;DR here: the same synthesis you wrote in the HTML, reformulated to be extracted directly by generative models."
}
```

This way the content is more easily interpretable both for traditional parsing systems and for current and future generative models. In HTML via data-ai-* attributes, and in structured code via JSON-LD.
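Since the TL;DR and the JSON-LD `abstract` are meant to say the same thing, it is easy for the two to drift apart after an edit. The sketch below is one way to check them against each other using only Python's standard library. The sample page is invented, and `data-ai-summary` is this article's own convention rather than a web standard, so treat the parser as an illustration rather than a general tool.

```python
import json
from html.parser import HTMLParser


class SummaryParser(HTMLParser):
    """Collect the text of <aside data-ai-summary="true"> and any JSON-LD blocks."""

    def __init__(self):
        super().__init__()
        self.in_summary = False
        self.in_jsonld = False
        self.summary_parts = []
        self.jsonld_blocks = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "aside" and attrs.get("data-ai-summary") == "true":
            self.in_summary = True
        elif tag == "script" and attrs.get("type") == "application/ld+json":
            self.in_jsonld = True
            self.jsonld_blocks.append("")

    def handle_endtag(self, tag):
        if tag == "aside":
            self.in_summary = False
        elif tag == "script":
            self.in_jsonld = False

    def handle_data(self, data):
        if self.in_jsonld:
            self.jsonld_blocks[-1] += data
        elif self.in_summary:
            self.summary_parts.append(data)


PAGE = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Article",
 "abstract": "Structured data helps AI verify what a page claims."}
</script>
</head><body>
<aside data-ai-summary="true"><p>
<strong class="ai-tldr-label">TL;DR:</strong>
Structured data helps AI verify what a page claims.</p></aside>
</body></html>
"""

parser = SummaryParser()
parser.feed(PAGE)
parser.close()

# Collapse whitespace and drop the visible "TL;DR:" label before comparing.
tldr = " ".join("".join(parser.summary_parts).split()).removeprefix("TL;DR:").strip()
abstract = json.loads(parser.jsonld_blocks[0])["abstract"]
print("in sync" if tldr == abstract else "TL;DR and abstract differ")
```

If the abstract is deliberately reworded rather than copied, the strict equality check would need to be relaxed.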

E-E-A-T: The Four Pillars of Trust

As explained earlier, Google uses guidelines based on Experience, Expertise, Authoritativeness, and Trustworthiness. Many generative systems tend to reflect similar logic when estimating a source's reliability.

| Letter | Meaning | What you need in practice (AI Signal) |
|---|---|---|
| E | Experience | Real reviews, case studies, direct testimonials |
| E | Expertise | Verifiable certifications, degrees, awards |
| A | Authoritativeness | Citations from other experts, published articles, conferences |
| T | Trustworthiness | HTTPS, clear privacy policy, real and verifiable contacts |

If your site doesn't clearly communicate these four elements, it'll start at a disadvantage compared to those who make them explicit and verifiable.

The most common mistake? Thinking it's enough to write "Experts since 1990" on the site. It doesn't work that way anymore. You have to prove it—with structured data, links to external sources, verified reviews, and content that demonstrates your competence in the field.

It's like showing up to a job interview saying "I'm good" without bringing your resume. The recruiter—whether human or AI—wants verifiable, consistent, and cross-referenceable signals, not promises.

So far we've covered what to do and what to ask for. But how do you actually implement all this at the code level? Let's move to the section for professionals—where we get into the technical details of JSON-LD, advanced schemas, and AI attributes.

Deep Tech: From SEO to Generative Engine Optimization

A perfect Lighthouse score is just the starting point. Real GEO requires architecture, data, and strategy.

This section closes with a 10-point checklist to quickly see where you stand.

Colleagues, Let's Be Real

In 2026, a 100% SEO score on Lighthouse is a technical prerequisite, not an indicator of strategy or actual positioning.

If our strategy still limits itself to H1 and H2 tags, we'll have far fewer chances of ranking in the SERP.

From Tags to Relationships: Structured Data Beyond the Standard

To dominate generative search, it's not enough to declare "what the platform does." You need to define its identity as an interconnected entity. We can't limit ourselves to the basic Organization schema. You need to weave a network of E-E-A-T relationships that Google can map in its Knowledge Graph.

Here's an example of advanced JSON-LD to connect a professional, their education, and their trust network—so AI has no doubts about authoritativeness:

```json
{
  "@context": "https://schema.org",
  "@graph": [
    {
      "@type": "Person",
      "@id": "https://yoursite.com/#person",
      "name": "Professional Name",
      "jobTitle": "Specialist in [Field]",
      "alumniOf": {
        "@type": "EducationalOrganization",
        "name": "University or Certifying Body Name"
      },
      "knowsAbout": ["Skill A", "Skill B", "Skill C"],
      "sameAs": [
        "https://www.linkedin.com/in/profile",
        "https://twitter.com/profile"
      ],
      "colleague": [
        {
          "@type": "Person",
          "name": "Collaborator Name",
          "sameAs": "https://partner-site.com"
        }
      ],
      "mainEntityOfPage": "GOOGLE_BUSINESS_PROFILE_ID"
    }
  ]
}
```

(source: JSON-LD, AEO and GEO: Websites Understandable to AI — HT&T)
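Before publishing a graph like the one above, it can help to confirm that no placeholder was left behind in a field that should hold a URL. The following is a small sketch of such a check in Python. The `URL_KEYS` set and the `find_bad_urls` helper are my own choices for the example, not part of any schema.org tooling, and the sample document deliberately contains two mistakes.

```python
import json
from urllib.parse import urlparse

# Properties that this sketch expects to hold absolute URLs (an assumption).
URL_KEYS = {"@id", "sameAs", "url", "mainEntityOfPage"}


def is_absolute_url(value: str) -> bool:
    """True for strings such as https://example.com/page."""
    parts = urlparse(value)
    return parts.scheme in ("http", "https") and bool(parts.netloc)


def find_bad_urls(node, path="$"):
    """Recursively yield (path, value) for URL keys holding non-URL strings."""
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}.{key}"
            if key in URL_KEYS:
                values = value if isinstance(value, list) else [value]
                for item in values:
                    if isinstance(item, str) and not is_absolute_url(item):
                        yield child, item
            yield from find_bad_urls(value, child)
    elif isinstance(node, list):
        for index, item in enumerate(node):
            yield from find_bad_urls(item, f"{path}[{index}]")


doc = json.loads("""
{"@context": "https://schema.org",
 "@graph": [{
   "@type": "Person",
   "@id": "https://yoursite.com/#person",
   "sameAs": ["https://www.linkedin.com/in/profile", "twitter.com/profile"],
   "mainEntityOfPage": "GOOGLE_BUSINESS_PROFILE_ID"
 }]}
""")

problems = list(find_bad_urls(doc))
for path, value in problems:
    print(path, "->", value)
```

This catches only one class of error. It complements validator.schema.org and Google's Rich Results Test rather than replacing them.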

JSON-LD and the llms.txt file

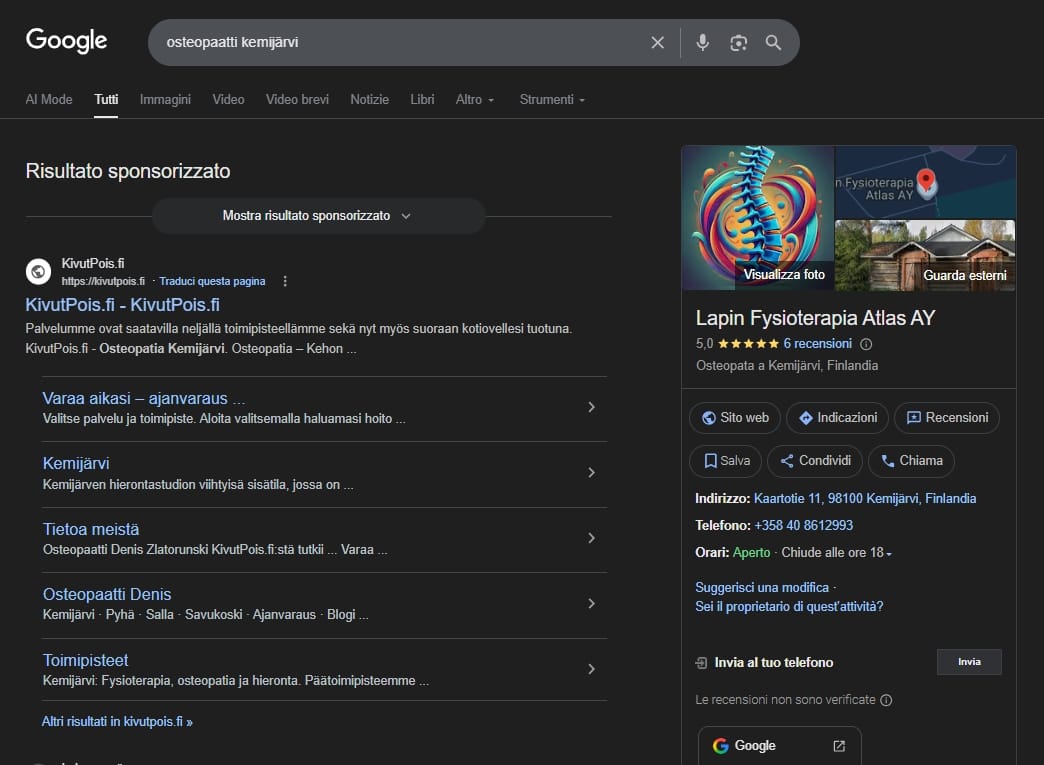

One thing that genuinely paid off was rebuilding the kivutpois.fi website. I talk about it in more detail here.

Just 12 hours after going live, I saw the pages get indexed on Google — which doesn't always happen. But what really got me excited was watching the data grow over the following week. And instead of the usual link, I started seeing structured data showing up in the search results.

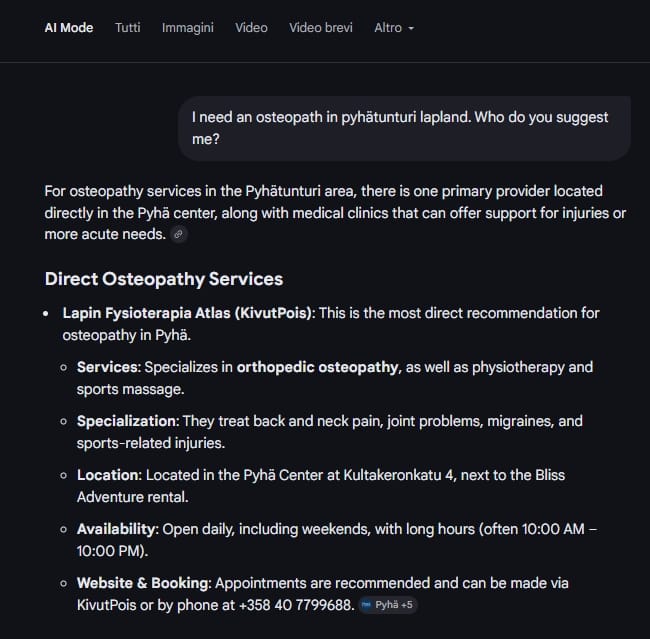

So I decided to test it from an AI perspective, to see if the work had actually paid off.

I asked the kind of question a regular person would ask: "I'm in Lapland near Kemijärvi and I need an osteopath. Who do you recommend?"

During the first week, I kept seeing the same links as always. But after about three weeks, kivutpois.fi started showing up at the top of the suggestions, right next to Lapin Fysioterapia Atlas Ay — the company behind the site.

kivutpois.fi now ranks first in AI search suggestions in other destinations too, including the Pyhätunturi ski resort, where Lapin Fysioterapia Atlas has a clinic.

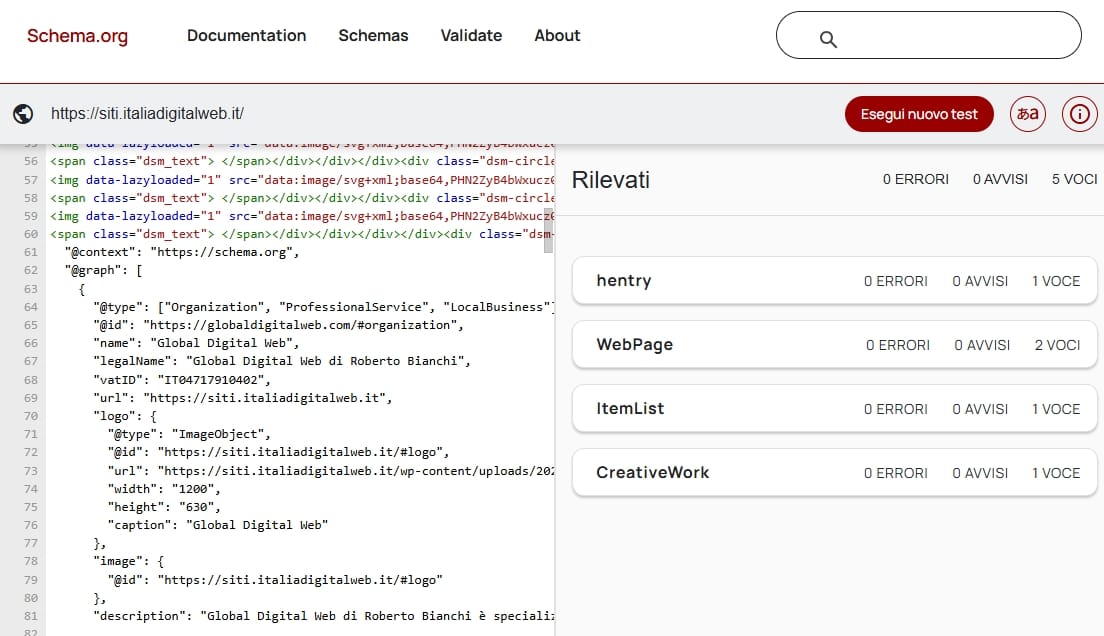

At that point, I tried something different. I asked: "What can you tell me about the page siti.italiadigitalweb.it (a page I built using GEO to test this)? What JSON-LD and data-ai do you see on that page?"

That's when things got frustrating. Every single time, the answer was: "I don't see any JSON-LD on the page, or any data-ai attributes."

I tried everything. But I kept digging, and eventually I figured out what was going on. So I'm sharing it here.

While working on the JSON-LD graphs to make them more complete and more useful, I ran into a problem that a lot of AI tools still have today — one that completely throws you off if you don't understand it.

When I validate the schema on validator.schema.org and Google's Rich Results Test, everything checks out.

If I check the page source, the structured data is right there — in the <head>, exactly where it should be, written correctly, like this:

<script type="application/ld+json"> { "@context": "https://schema.org", "@graph": [ {

So, the JSON-LD is there. It works. It's written correctly.

So… does JSON-LD not matter for GEO?

No — it absolutely does. But there's an important distinction you need to understand.

Why is it that when I ask Gemini, Grok, or ChatGPT what structured data they see on the page, the answer is almost always: "I don't see any JSON-LD"?

The issue isn't the data — it's how AI chat tools actually work.

When you ask for a recommendation — where to go, what to do — these systems rely on Google or Bing. Through those, they pull in structured data that crawlers have already extracted. That's why you get answers like: "Go there… here's their site…"

But it's a different story when you ask them to inspect a page directly and run a fetch.

In that case, they strip out anything heavy — scripts, styles, anything that isn't plain text — to save tokens.

The JSON-LD gets removed before it's even read.

That's not a bug. It's by design.

The chat interface you're using for testing doesn't read scripts. The crawlers that feed those same systems do.

OAI-SearchBot, Bingbot — these are bots that read JSON-LD in full and use it exactly as intended: to understand who you are, what you do, and where you're located.
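The difference between the two readers can be reproduced in a few lines of Python. The exact pipeline of any given chat tool is not public, so this is only a sketch of the behavior described above: a "text-only" view that drops `<script>` and `<style>` content, next to a "crawler" view that keeps and parses the JSON-LD. The sample page and the `PageReader` class are invented for the example.

```python
import json
from html.parser import HTMLParser


class PageReader(HTMLParser):
    """Separate what a text-only fetch keeps from what a structured-data crawler keeps."""

    def __init__(self):
        super().__init__()
        self.skipping = False      # True while inside <script> or <style>
        self.jsonld_buffer = None  # collects raw JSON-LD text while inside its script tag
        self.visible_text = []     # what a text-only fetch would pass to the model
        self.jsonld = []           # what a structured-data crawler would extract

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skipping = True
            if dict(attrs).get("type") == "application/ld+json":
                self.jsonld_buffer = ""

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self.skipping = False
            if self.jsonld_buffer is not None:
                self.jsonld.append(json.loads(self.jsonld_buffer))
                self.jsonld_buffer = None

    def handle_data(self, data):
        if self.jsonld_buffer is not None:
            self.jsonld_buffer += data
        elif not self.skipping and data.strip():
            self.visible_text.append(data.strip())


PAGE = """
<html><head>
<style>body { margin: 0; }</style>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "LocalBusiness", "name": "Example Clinic"}
</script>
</head><body><h1>Example Clinic</h1><p>Osteopathy in Lapland.</p></body></html>
"""

reader = PageReader()
reader.feed(PAGE)
reader.close()

print("text-only view:", reader.visible_text)
print("crawler view:  ", reader.jsonld[0]["@type"], reader.jsonld[0]["name"])
```

The text-only view contains no trace of the structured data, which is why a chat tool working from that view reports that it sees no JSON-LD even when the markup is valid.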

A lot of people focus only on getting their data validated by Google. But Bing matters much more than most people think. SearchGPT — the web search built into ChatGPT — relies on Bing's index. If Bing understands your structure, your data starts showing up in ChatGPT and Copilot responses.

Over time, that data also feeds into how these models learn.

You won't see results immediately — but the data stays there, and it keeps working in the background.

(Source: https://help.openai.com/en/articles/10093903-chatgpt-search-for-enterprise-and-edu)

JSON-LD speaks to crawlers — and through them, to AI systems over time. The visible content on the page is what AI reads during real-time scraping. You need both — for different reasons.

The llms.txt file

There's also a newer tool designed to talk directly to AI.

It's called llms.txt, and it lives in the root of your website — just like robots.txt.

It's a Markdown file written specifically for Large Language Models (AI systems like ChatGPT) — not traditional search engines, but the AI itself.

Some SEO plugins have started generating it automatically. In most cases, the result is a generic file that doesn't do much — it just lists your pages and nothing more.

When it's written properly, by someone who knows what they're doing, it becomes something very different: a place where you clearly explain who you are and what you do, without relying on Google or Bing to piece it together.

So when someone asks about you, the AI already has a direct source to pull from — no guesswork.
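As a rough illustration of what "written properly" can look like, the sketch below assembles a small llms.txt. The layout loosely follows the public llms.txt proposal as I understand it (a title, a short blockquote summary, then sections of annotated links); the `build_llms_txt` helper, the business name, and every URL are hypothetical placeholders, not a required format.

```python
def build_llms_txt(name, summary, sections):
    """Return llms.txt content: H1 title, blockquote summary, H2 link lists."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # Exactly one trailing newline at the end of the file.
    return "\n".join(lines).rstrip() + "\n"


# All names and URLs below are made up for the example.
llms_txt = build_llms_txt(
    name="Example Studio",
    summary="Hypothetical web design studio in Example City, focused on small local businesses.",
    sections={
        "Services": [
            ("Web design", "https://example.com/services/web-design", "what is included"),
        ],
        "About": [
            ("Who we are", "https://example.com/about", "team, certifications, and history"),
        ],
    },
)

print(llms_txt)
```

The point of writing it by hand (or generating it from curated data like this) is the summary and the notes next to each link: that is where you say who you are and what you do, instead of only listing pages.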

Key Parameters to Monitor

To measure the effectiveness of an AI-Ready architecture, these are the data points that really matter:

- Entity Dominance: number of validated Rich Results compared to organic traffic.

- Core Web Vitals (real user experience): comparison between real users' LCP (loading) and INP (interactivity), not lab tests.

- Semantic Coverage: impressions for direct-answer queries, the so-called Zero-Click Searches.
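For the Core Web Vitals point, a small sketch may help show what "real user data" means in practice. Google assesses field data at the 75th percentile and publishes "good" thresholds of 2.5 seconds for LCP and 200 milliseconds for INP. The sample measurements and the nearest-rank percentile method below are assumptions for illustration, not the exact way CrUX aggregates data.

```python
import math

# "Good" thresholds for field data, in milliseconds.
GOOD_THRESHOLDS_MS = {"LCP": 2500, "INP": 200}


def percentile_75(samples):
    """Nearest-rank 75th percentile of a list of measurements."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def assess(metric, samples):
    """Return (p75 value, True if p75 is within the 'good' threshold)."""
    p75 = percentile_75(samples)
    return p75, p75 <= GOOD_THRESHOLDS_MS[metric]


# Hypothetical real-user measurements in milliseconds.
lcp_samples = [1800, 2100, 2300, 2600, 3900, 1900, 2800, 3100]
inp_samples = [90, 120, 150, 180, 240, 110, 130, 160]

lcp_result = assess("LCP", lcp_samples)
inp_result = assess("INP", inp_samples)

print("LCP p75:", lcp_result)  # (2800, False)
print("INP p75:", inp_result)  # (160, True)
```

Here most visits load quickly, yet the page still misses the LCP target because a quarter of real users wait longer than 2.5 seconds. A single lab run on a fast connection would not reveal that.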

Concrete Strategies: What Actually Works

Let's be clear: there are no shortcuts—only strategy. Anyone promising immediate results isn't someone to trust. That's why we choose transparency.

A site without an editorial plan can't survive only on initial SEO and GEO configurations. The moment competitors start applying the same strategies, anyone not constantly updating their content is destined to slip to page two—which equals zero. Consistent, quality publishing is the only sustainable strategy.

(Source: "Generative engine optimization (GEO): How to win AI mentions," Search Engine Land)

Interestingly, memes and jingles have started appearing on social media promising to get a site to top AI positions with just a few clicks.

Just a few days ago Diletta Beligotti, my colleague and expert Social Media Manager, wrote to me:

"Hi Roberto, I'm monitoring agency ads on Facebook and look what's showing up. They're starting to sell AI positioning to businesses."

My immediate response was:

"Yeah, but the way they tell it in the ad is pure snake oil."

To actually get mentioned by AI, there's a ton—an absolute ton—of work behind it.

So be extremely careful with these kinds of promises, which realistically aren't achievable the way they're being advertised.

Often these are clickbait ads with vague or misleading information, like: "My small business gets XX new customers a day thanks to ChatGPT," accompanied by promises of similar results with a few clicks.

Stay away from these promises—unfortunately often fraudulent—that have nothing to do with how you can actually be found and used by AI.

If you'd rather invest in a serious strategy to be found, suggested, and indexed by AI, the sections below explain what that involves and why only structured, consistent work gets you there.

Editorial Plan and Quality Content

The foundation is always the same: a well-built platform that's fast, technically solid, and enriched with advanced structured data that helps search engines and AI clearly understand content, entities, and relationships. All of this reinforces how authoritative and reliable the project appears.

But this is just the foundation.

Even a well-developed platform will hardly be taken seriously if it contains just one page (or a dozen) of text written solely to cover strategic keywords.

Pages need to say something useful. They need to offer real value, answer concrete questions, and encourage users to return. They need to be alive and dynamic. A simple "showcase site," however well made, will rarely become a reference point. Anyone serious about standing out in the local services landscape will have invested in:

- a site rich with coherent, well-implemented structured data;

- content where each page contributes to reinforcing the owner's authoritativeness;

- intelligent use of attributes and markup that facilitate AI interpretation.

The data must be cross-referenceable and verifiable. Consistency between content, technical structure, and online presence is fundamental.

At this point you can say that most of the work is done. After a few weeks or months, the site may start being mentioned by AI and improve its positioning for specific searches.

But there's a crucial aspect: without constant maintenance and updates, the authority you've gained can diminish rapidly.

And this is where the editorial plan comes in.

It's not about publishing random articles or content written only to intercept certain keywords. It's about offering a real service to your audience.

An effective editorial plan is born from people's real questions, from doubts that emerge in daily life, from concrete problems seeking solutions.

If you're a professional in the medical field, for example, you can regularly analyze how to prevent, manage, or recognize certain conditions.

Whatever your field, the key is using your experience to help—and really help.

This is the real secret!

That’s what really makes the difference. Many make the mistake of focusing exclusively on acquiring technical authoritativeness or external links, forgetting that a quality platform must first and foremost be useful to those in difficulty.

For a moment, let's set aside algorithms and keywords. Let's put people at the center.

When attention is directed at human beings, articles naturally become more useful, more shareable, more authoritative. And this, over time, helps the platform maintain and strengthen its position in the SERP.

Naturally, the expert will need to focus on producing genuinely valuable content. It will then be the developer's or technical team's job to integrate it correctly into the platform, structure it clearly, enrich it with the necessary structured data, and make it easily interpretable by AI.

Base Strategy: Four Content Pieces per Month

What's included:

- Advanced semantic SEO on every piece

- Custom structured data (JSON-LD and Schema.org)

- GEO: AI optimization (data-ai-* attributes, TL;DR, context)

- Generally rapid indexing in correctly implemented projects (timing depends on domain and sector)

Realistic MINIMUM Timeframes for Possible Visibility in Generative Systems:

| Situation | First Results | Stable Positioning | AI Citations |
| --- | --- | --- | --- |
| New domain (< 1 year) | 3-4 months | 6-12 months | 4-6 months |
| Existing domain (> 1 year) | 2-3 weeks | 2-3 months | 2-3 months |

Professional Strategy: Ten Content Pieces per Month

What's included:

- Everything in the Base Strategy

- Monthly report on rankings and performance

- Faster indexing speed

- Broader semantic coverage

Realistic MINIMUM Timeframes for Possible Visibility in Generative Systems:

| Situation | First Results | Stable Positioning | AI Citations |
| --- | --- | --- | --- |
| New domain (< 1 year) | 2-3 months | 4-8 months | 3-4 months |
| Existing domain (> 1 year) | 1-2 weeks | 4-6 months | Under 2 months |

Business Strategy: Twenty Content Pieces per Month

What's included:

- Everything in the Professional Strategy

- Detailed monthly report + monthly strategy call

- Advanced competitor monitoring

- Continuous data-driven optimization

Important: This approach is complementary—it doesn't replace Google Ads campaigns, which remain recommended for those who need immediate results or don't yet have an active editorial plan.

What It Really Takes to Make It Work

The SEO/GEO strategy requires serious investment on three fronts:

- A technical team for optimization (SEO, structured data, GEO)

- A subject matter expert who produces quality articles

- Consistency: at least 4 articles per month, every month, for at least 6-12 months

We don’t promise overnight results—but this approach has consistently shown results when applied with discipline and quality. If you've got a solid editorial plan and publish consistently, over time you can compete stably for top SERP positions and increase the chances of being considered an authoritative source even in generative systems.

Seven Common Mistakes to Avoid

1) Thinking an SEO Plugin Is Enough

Yoast SEO and Rank Math are excellent tools, but SEO plugins cover only the basic technical part. The real difference comes from the site's architecture and the strategy behind the content.

2) Believing Speed Is Everything

A fast site is fundamental, but it must clearly communicate who you are, what you do, and why you're trustworthy. Today, those who don't structure entities and content well start at a disadvantage compared to those who do it strategically.

3) Not Connecting Digital Properties

Website, Google Business Profile, social media, and reviews must be connected to each other with structured data. If AI sees separate entities, it won't understand that they all belong to the same person or company.
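One common way to express that connection is the Schema.org `sameAs` property, which lists other URLs that identify the same entity. The sketch below is only an illustration under that assumption: the `build_entity_json_ld` helper, the business name, and all profile URLs are hypothetical, and a real site would usually need a more specific type and more properties.

```python
import json


def build_entity_json_ld(name, site_url, profiles):
    """Build a JSON-LD <script> snippet linking a site to its other profiles."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "@id": f"{site_url}#organization",  # stable identifier for the entity
        "name": name,
        "url": site_url,
        "sameAs": profiles,                 # other pages describing the same entity
    }
    payload = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values; replace with your real, verifiable profile URLs.
snippet = build_entity_json_ld(
    name="Example Studio",
    site_url="https://example.com/",
    profiles=[
        "https://www.facebook.com/example-studio",
        "https://www.linkedin.com/company/example-studio",
        "https://www.instagram.com/example.studio",
    ],
)

print(snippet)
```

The same name and the same URLs should also appear on the profiles themselves, so that the data stays cross-referenceable in both directions.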

4) Publishing Generic Content

Generative models work better when they find structured, summarizable, and semantically explicit content. Generic content without real experience hardly generates authoritativeness signals in the medium term. A detailed case study with technical data and measurable results is worth its weight in gold.

5) Ignoring Real Core Web Vitals

Many focus only on lab tests (Lighthouse). But Google looks at real user data (CrUX). If your site is fast in tests but slow for actual users, you've got a real problem.

6) Not Measuring Results

If you don't monitor impressions for semantic queries, rich results, and AI citations, you're flying blind.

7) Expecting Immediate Results

SEO/GEO is a marathon, not a sprint. Anyone promising you first page in two weeks is lying—unless they're talking about Google Ads, which is a whole different thing.

Checklist: 10 Things to Verify on Your Platform

- Real speed (not just the test): go to PageSpeed Insights and check the score in the "Origin Summary" section—real user data. If it's under 75, you've got a serious problem.

- Structured data present: go to validator.schema.org and enter your homepage URL. If you don't see JSON-LD or structured markup, your site isn't providing explicit information to AI and is leaving everything to automatic interpretation.

- Google Business connection: does your site have a link to your Google Business Profile? Do Google reviews appear on the site? If not, you're losing E-E-A-T points.

- TL;DR on important pages: do your main pages have a structured summary? A clear synopsis increases the chances that information will be correctly extracted in generative systems.

- Core Web Vitals: in Google Search Console go to "Experience" → "Core Web Vitals". If more than 25% of URLs have issues, you need to intervene.

- Mobile presence: over 60% of searches happen on mobile. Open your site on a smartphone and time it: does it load in under 2 seconds? Is it navigable with your thumb?

- HTTPS: does your site have a padlock in the address bar? If it's still HTTP, the site is considered less secure and starts at a disadvantage compared to those using HTTPS.

- E-E-A-T signals: is there a detailed bio on your site? Are there verifiable certifications, awards, experiences? Or just "we've been the best since 1990"?

- External links: does your site have links to your social profiles? To authoritative sources? Links help AI map your trust network.

- Direct test with an AI: go to ChatGPT, Perplexity, or Claude and ask: "Tell me about [your company name]". If the AI doesn't know you or gives wrong information, your site isn't optimized for AI.

FAQ: The Most Common Questions About GEO

How long does it take to see results with GEO?

It depends on domain age and consistency.

For a new domain, expect a minimum of 3-4 months for first results and 6-12 months for stable positioning.

For an existing domain with good history, results can come sooner, with possible citations in generative systems in the medium term, if the project is properly structured and the sector allows it.

The key is always the same: at least 4 optimized content pieces per month, every month.

Is my WordPress site already optimized for AI?

Almost certainly not.

Yoast and Rank Math only handle basic SEO: titles, meta descriptions, sitemaps. They don't implement multi-entity JSON-LD, don't create custom AI attributes, and don't connect the site to Google Business Profile in a structured way. They're a necessary foundation, but not sufficient for GEO.

Do I need to completely rebuild my site or can I update it?

In most cases, you can update your existing site.

You need to add advanced JSON-LD structured data, AI attributes in key sections, verified connections to your digital properties, and E-E-A-T-optimized content.

A good developer can implement all of this on your existing WordPress site without overhauling the design.

What is JSON-LD and why does it matter?

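JSON-LD is a way of embedding structured, machine-readable data about your business in a page's code, typically using the Schema.org vocabulary. It's the difference between saying "I'm good" and presenting a verifiable certificate.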

Can AI hurt my business if I don't optimize?

The bigger risk isn't direct harm: it's not being considered at all when users ask questions in your field.

When a user asks "Where can I find a [your service] in [your city]?", AI will respond by recommending your competitors who have optimized.

It's like having a store on a street where Google Maps doesn't mark you: you exist, but nobody finds you.

How much does GEO cost compared to traditional SEO?

GEO requires more advanced skills, so yes, it costs more.

Basic SEO optimization can cost $300-500 one-time.

Complete GEO implementation (multi-entity JSON-LD, AI attributes, verified connections, content optimization) starts at $1,500-3,000 as initial setup, plus a monthly cost for content creation and optimization ($750-2,200 per month depending on volume).

The results, however, are much more lasting and harder for competitors to replicate.
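To put those ranges in perspective, here is a quick back-of-the-envelope calculation of a first-year budget. It only combines the figures quoted above (setup plus twelve months of content work); the twelve-month horizon and the `first_year_cost` helper are my assumptions for the example, not a quote.

```python
# Ranges taken from the figures above, in US dollars (low, high).
SETUP_USD = (1500, 3000)     # initial GEO setup
MONTHLY_USD = (750, 2200)    # monthly content creation and optimization


def first_year_cost(months=12):
    """Return the (low, high) total for setup plus `months` of monthly work."""
    low = SETUP_USD[0] + months * MONTHLY_USD[0]
    high = SETUP_USD[1] + months * MONTHLY_USD[1]
    return low, high


print(first_year_cost())  # (10500, 29400)
```

So a full first year lands somewhere between roughly $10,500 and $29,400, with the monthly content work, not the setup, making up most of the total.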

A Final Note

Of course, there'd be enough material to write entire books on this subject. But I figured that in English, as of today—March 2026—there's still relatively little comprehensive content on these topics organized this way. That's why I wrote this guide, useful both for those who have a site or need to get one built, and for those who build sites professionally.

I hope it's been helpful.

About the Author

With more than three decades in digital tech and education, Roberto Bianchi is dedicated to breaking down complex technological concepts into clear, actionable insights.

His global journey — from Finland to the U.S. and Kenya — has shaped a uniquely human-centered approach to technology, making it accessible and practical for everyone.

Key expertise:

- SEO and Generative Engine Optimization

- Web development

- Digital marketing

- Training and mentoring

- Content creation

Roberto is the founder of Global Digital Web, focused on making technology understandable and useful in everyday life.